Etsy has honestly become a terrible front for selling cheap overseas goods now. There’s no quality control at all, and no direct guardrails to ensure what you’re receiving isn’t just proxied junk from elsewhere.

13·2 days ago

Pieces of shit

11·3 days ago

No, that’s not what novel ideation is whatsoever 🤦

Again…these models work from a list of boundaries, logic, and rules made by humans. They don’t make it up themselves because…they.fucking.cant.

If they could make their own rules and conclusions without human intervention, then you have novel ideas. But…they.100%.FUCKING.CANT.DO.THAT.

37·4 days ago

I mean…there have been plenty of people making PoCs showing Graphene isn’t really THAT secure; it’s probably just more obscure to a point. They’re pissed the cops have to work at it, but even somebody using Samsung or Google tools to properly sandbox certain data has the same capability AFAIK.

4·5 days ago

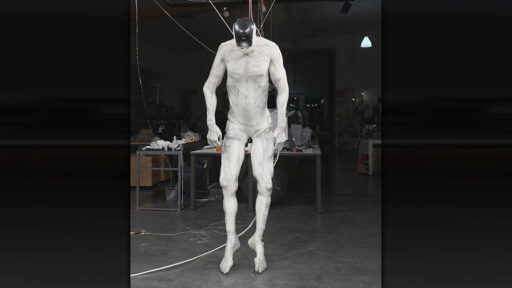

Not only is this inaccurate, it still doesn’t make sense when you’re talking about a bipedal manufacturing robot.

Like motion capture, all you need to capture from remote operation of the unit is the input articulation from the operator, which is then translated into acceptable operation movements on the unit with input from its local sensors. The majority of these things (if using pre-cap operating data) are just trained on iterative scenarios and retrained for major environmental changes. They don’t use tele-operation live because it’s inherently dangerous and takes a lot of the local sensor inputs offline for obvious reasons.

OC is saying what all Robotics Engineers have been saying about these bipedal “PR Bots” for years: the power and effort to simply make these things walk is incredibly inefficient, and makes no sense in a manufacturing setting where they will just be doing repetitive tasks over and over.

Wheels move faster than legs, single purpose mechanisms will be faster and less error-prone, and actuation takes less time to train.

1324·6 days ago

RUST AGAIN.

Just throwing this out because I’ve been hammering these Rustholes up and down these threads who claim it’s precious and beyond compare 🤣

I will almost certainly link back to this comment in the future.

14·6 days ago

Rich assholes firing people to make the leftovers work twice as hard to “incorporate AI” into their workflow is though.

The job losses are fucking REAL, and everyone expects you to use this tedious bullshit now.

20·9 days ago

Did it…not have that already? I swear it did, but honestly I thought Exchange was dead long ago.

7·9 days ago

From your own linked paper:

To design a neural long-term memory module, we need a model that can encode the abstraction of the past history into its parameters. An example of this can be LLMs that are shown to be memorizing their training data [98, 96, 61]. Therefore, a simple idea is to train a neural network and expect it to memorize its training data. Memorization, however, has almost always been known as an undesirable phenomena in neural networks as it limits the model generalization [7], causes privacy concerns [98], and so results in poor performance at test time. Moreover, the memorization of the training data might not be helpful at test time, in which the data might be out-of-distribution. We argue that, we need an online meta-model that learns how to memorize/forget the data at test time. In this setup, the model is learning a function that is capable of memorization, but it is not overfitting to the training data, resulting in a better generalization at test time.

Literally what I just said. This is specifically addressing the problem I mentioned, and goes on further to exacting specificity on why it does not exist in production tools for the general public (it’ll never make money, and it’s slow, honestly). In fact, there is a minor argument later on that developing a separate supporting system negates even referring to the outcome as an LLM, and the supported referenced papers linked at the bottom dig even deeper into the exact thing I mentioned on the limitations of said models used in this way.

71·9 days ago

It most certainly did not…because it can’t.

You find me a model that can take multiple disparate pieces of information and combine them into a new idea not fed with a pre-selected pattern, and I’ll eat my hat. The very basis of how these models operate is in complete opposition to the idea that it can spontaneously have a new and novel idea. New…that’s what novel means.

I can pointlessly link you to papers or blogs from researchers explaining it, or you can just ask one of these things yourself, but you’re not going to listen, which is on you for intentionally deciding to remain ignorant of how they function.

Here’s Terrence Kim describing how they set it up using GRPO: https://www.terrencekim.net/2025/10/scaling-llms-for-next-generation-single.html

And then another researcher describing what actually took place: https://joshuaberkowitz.us/blog/news-1/googles-cell2sentence-c2s-scale-27b-ai-is-accelerating-cancer-therapy-discovery-1498

So you can obviously see…not novel ideation. They fed it a bunch of trained data, and it correctly used the different pattern alignment to say “If it works this way otherwise, it should work this way with this example.”

Sure, it’s not something humans had gotten to yet, but that’s the entire point of the tool. Good for the progress, certainly, but that’s its job. It didn’t come up with some new idea about anything because it works from the data it’s given, and the logic boundaries of the tasks it’s set to run. It’s not doing anything super special here, just doing it very efficiently.

74·9 days ago

Nah, I’m just not going to write a novel on Lemmy, ma dude.

I’m not even spouting anything that’s not readily available information anyway. This is all well known, hence everybody calling out the bubble.

93·9 days ago

🤦🤦🤦 No…it really isn’t:

Teams at Yale are now exploring the mechanism uncovered here and testing additional AI-generated predictions in other immune contexts.

Not only is there no validation, they have only begun even looking at it.

Again: LLMs can’t make novel ideas. This is PR, and because you’re unfamiliar with how any of it works, you assume MAGIC.

Like every other bullshit PR release of its kind, this is simply a model being fed a ton of data and running through thousands of millions of iterative segments testing outcomes of various combinations of things that would take humans years to do. It’s not that it is intelligent or making “discoveries”, it’s just moving really fast.

You feed it 102 combinations of amino acids, and it’s eventually going to find new chains needed for protein folding. The thing you’re missing there is:

- All the logic programmed by humans

- The data collected and sanitized by humans

- The task groups set by humans

- The output validated by humans

It’s a tool for moving fast through data, a.k.a. A REALLY FAST SORTING MECHANISM

Nothing at any stage of development is novel output, or validated by any models, because…they can’t do that.

93·9 days ago

I sure do. Knowledge, and being in the space for a decade.

Here’s a fun one: go ask your LLM why it can’t create novel ideas, it’ll tell you right away 🤣🤣🤣🤣

LLMs have ZERO intentional logic that allows them to even comprehend an idea, let alone craft a new one and create relationships between others.

I can already tell from your tone you’re mostly driven by bullshit PR hype from people like Sam Altman, and are an “AI” fanboy, so I won’t waste my time arguing with you. You’re in love with human-made logic loops and datasets, bruh. There is not now, nor was there ever, a way for any of it to become some supreme being of ideas and knowledge as you’ve been pitched. It’s super fast sorting from static data. That’s it.

You’re drunk on Kool-Aid, kiddo.

81·9 days ago

Animal brains have pliable neuron networks and synapses to build and persist new relationships between things. LLMs do not. This is why they can’t have novel or spontaneous ideation. They don’t “learn” anything, no matter what Sam Altman is pitching you.

Now…if someone develops this ability, then they might be able to move more towards that…which is the point of this article and why the guy is leaving to start his own project doing this thing.

So you sort of sarcastically answered your own stupid question 🤌

112·9 days ago

Lol 🤣 I’m SO EMBARRASSED. You’re totally right and understand these things better than me after reading a GOOGLE BLOG ABOUT THEIR PRODUCT.

I’ll never speak to this topic again since I’ve clearly been bested with your knowledge from a Google Blog.

114·9 days ago

LLMs are just fast sorting and probability; they have no way to ever develop novel ideas or comprehension.

The system he’s talking about is more about using NNL, which builds new relationships to things that persist. It’s deferential relationship learning and data path building. Doesn’t exist yet, so if he has some ideas, it may be interesting. Also more likely to be the thing that kills all humans.

18·9 days ago

This is code for “Hey government, you better be ready to bail us all out”.

They’re overpriced crap now. You buy at a 3x premium for the hardware, and you get the OS. That’s it.

51·11 days ago

Framework has a refurb store with deep discounts. No need to buy new.

MSI is going to be second on the list because they’ll regularly replace things out of warranty if they have spare parts lying around, or sell you entire main boards at a steep discount if a laptop board goes bad out of warranty. Same with all other parts.

Fucking.WOW.

Sam Altman just LOVES answering stupid questions. People should be asking him about this in those PR sprints.